Competitive analysis in AI ecosystems is fundamentally different from traditional SEO benchmarking.

In Google, you analyze ranking position.

In AI systems, you analyze inclusion probability, narrative dominance, and displacement risk.

LLMs do not “rank pages” - they synthesize recommendations based on probabilistic associations. This changes the nature of competitive intelligence entirely.

When a user asks:

- “What is the best AI visibility platform?”

- “Top AI brand monitoring tools for enterprise”

- “Which platform leads in generative optimization?”

The answer is constructed dynamically. The brands included are not just ranked - they are chosen.

That distinction matters.

1. Competitive Inclusion Mapping

The first layer of AI competitive analysis is structured prompt mapping.

You must track competitors across:

- Category prompts

- Enterprise prompts

- Comparative prompts

- Technical prompts

Competitive Prompt Benchmark Table

| Prompt | Your Brand | Competitor A | Competitor B | Sensitivity Level |
|---|---|---|---|---|
| Best AI visibility platform | 2nd | 1st | 3rd | High |
| Enterprise AI positioning tool | 1st | Not listed | 2nd | Medium |
| AI competitive analysis software | Not listed | 1st | 2nd | High |
| Generative optimization tools | 3rd | 2nd | 1st | High |

This is where many teams realize something uncomfortable:

They appear in generic prompts, but disappear in enterprise evaluation prompts.

And those evaluation prompts are where revenue is decided.

Advanced teams centralize this monitoring inside an AI Visibility Platform to track structured prompt libraries across multiple models.

Without systematized tracking, competitive shifts go unnoticed.
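As a rough illustration, the benchmark table above can be turned into a simple inclusion check. The Python sketch below assumes the ranks are recorded by hand, with `None` standing in for "Not listed". The names `BENCHMARK`, `inclusion_rate`, and `missing_prompts` are hypothetical and do not come from any platform's API.

```python
from typing import Optional

# Ranks copied from the benchmark table above; None means "Not listed".
BENCHMARK: dict[str, dict[str, Optional[int]]] = {
    "Best AI visibility platform": {"Your Brand": 2, "Competitor A": 1, "Competitor B": 3},
    "Enterprise AI positioning tool": {"Your Brand": 1, "Competitor A": None, "Competitor B": 2},
    "AI competitive analysis software": {"Your Brand": None, "Competitor A": 1, "Competitor B": 2},
    "Generative optimization tools": {"Your Brand": 3, "Competitor A": 2, "Competitor B": 1},
}


def inclusion_rate(benchmark: dict[str, dict[str, Optional[int]]], brand: str) -> float:
    """Share of prompts in which the brand is mentioned at all."""
    ranks = [row[brand] for row in benchmark.values()]
    return sum(rank is not None for rank in ranks) / len(ranks)


def missing_prompts(benchmark: dict[str, dict[str, Optional[int]]], brand: str) -> list[str]:
    """Prompts where the brand does not appear in the answer."""
    return [prompt for prompt, row in benchmark.items() if row[brand] is None]


if __name__ == "__main__":
    for brand in ("Your Brand", "Competitor A", "Competitor B"):
        print(brand, inclusion_rate(BENCHMARK, brand), missing_prompts(BENCHMARK, brand))
```

With the table's values, Your Brand and Competitor A are each included in 3 of 4 prompts, while Competitor B appears in all four. The one prompt where Your Brand is missing is "AI competitive analysis software", which is exactly the kind of gap that aggregate numbers hide.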

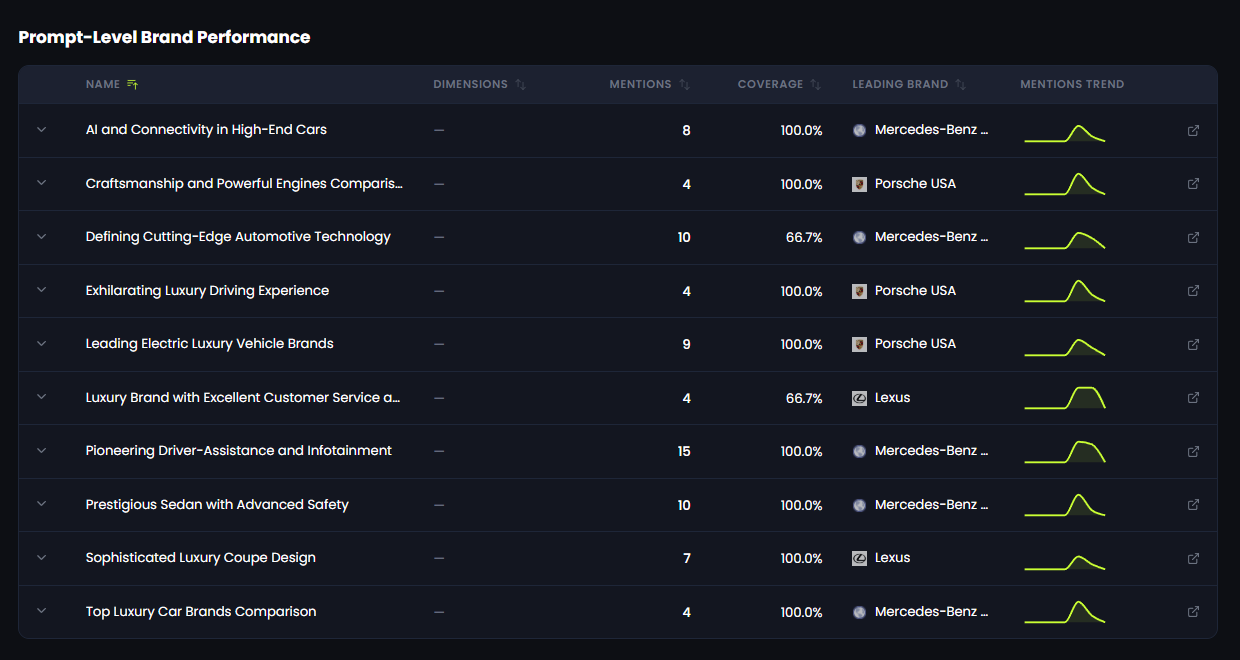

Prompt-Level Competitive Distribution in Practice

The benchmark framework above outlines how competitive inclusion should be structured.

But strategic volatility only becomes visible when analysis moves to the prompt level.

The snapshot below demonstrates how leadership shifts across selected high-intent queries — revealing intent-specific dominance patterns that aggregate metrics often conceal.

This level of structured High-Intent Prompt Monitoring is powered by the 42A AI Visibility Platform, enabling organizations to detect competitive displacement before it impacts revenue.

Prompt-level competitive dominance across selected high-intent queries.

Data generated within the 42A AI Visibility Platform.

This distribution highlights a critical competitive reality:

Leadership in AI answers is intent-dependent.

A brand may dominate innovation-driven prompts while losing authority in service-oriented or luxury comparison queries. These micro-shifts compound into strategic displacement over time.

Without structured prompt-level monitoring, competitive erosion happens invisibly — until it affects pipeline performance.

2. Weighted Position Dominance

Position inside AI answers strongly impacts perceived authority.

Being mentioned first is not cosmetic: readers tend to anchor on the first option they see.

Authority Weight Model

| Position | Relative Authority Influence |
|---|---|
| 1st Mention | ~45% |
| 2nd Mention | ~28% |
| 3rd Mention | ~15% |
| Beyond 3 | Marginal |

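A competitor that consistently appears second may seem “close,” but weighted dominance shows otherwise: under these weights, a consistent second place carries only about 62% of the first-place influence (28 / 45).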

Small positional gaps compound perception over time.
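To make the weighting concrete, here is a minimal Python sketch that applies the Authority Weight Model to the ranks from the benchmark table in section 1. It treats "Marginal" and "Not listed" as zero, which is an assumption made for illustration, and the names `POSITION_WEIGHTS`, `MARGINAL_WEIGHT`, and `weighted_dominance` are hypothetical.

```python
from typing import Optional

# Weights taken from the Authority Weight Model table, expressed as fractions.
POSITION_WEIGHTS = {1: 0.45, 2: 0.28, 3: 0.15}
# Assumption: positions beyond 3rd ("Marginal") contribute nothing.
MARGINAL_WEIGHT = 0.0


def position_weight(rank: Optional[int]) -> float:
    """Authority weight for one answer; None means the brand was not listed."""
    if rank is None:
        return 0.0
    return POSITION_WEIGHTS.get(rank, MARGINAL_WEIGHT)


def weighted_dominance(ranks: list[Optional[int]]) -> float:
    """Average authority weight across a set of prompts."""
    if not ranks:
        return 0.0
    return sum(position_weight(rank) for rank in ranks) / len(ranks)


# Ranks per prompt, in the row order of the benchmark table from section 1.
RANKS = {
    "Your Brand": [2, 1, None, 3],
    "Competitor A": [1, None, 1, 2],
    "Competitor B": [3, 2, 2, 1],
}

scores = {brand: weighted_dominance(ranks) for brand, ranks in RANKS.items()}

if __name__ == "__main__":
    for brand, score in scores.items():
        print(f"{brand}: {score:.3f}")
```

Under these assumptions the scores come out at roughly 0.22 for Your Brand, 0.295 for Competitor A, and 0.29 for Competitor B. Competitor A leads despite being absent from one prompt, because two first-place mentions outweigh steadier mid-table placements.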

3. Narrative Framing Differential

LLMs do not merely list competitors - they describe them.

Consider the difference:

- “Leading enterprise platform”

- “Emerging AI startup”

- “Affordable alternative”

- “Specialized solution”

These descriptors shape perception before the user ever clicks anything.

Framing Differential Matrix

| Brand | Dominant Descriptor | Risk Assessment |
|---|---|---|
| Your Brand | Enterprise-grade | Stable |
| Competitor A | Category leader | Strategic threat |
| Competitor B | Cost-efficient | Pricing pressure |

A competitor that consistently receives stronger authority descriptors is more deeply embedded in the model's narrative about the category.
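One simple way to fill in the "Dominant Descriptor" column is to tally the descriptors observed for each brand across many responses. The Python sketch below assumes the descriptors have already been extracted from the answers, by hand or otherwise, and the sample observations are invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical (brand, descriptor) pairs pulled from a batch of AI answers.
OBSERVATIONS = [
    ("Your Brand", "Enterprise-grade"),
    ("Your Brand", "enterprise-grade"),
    ("Your Brand", "Specialized solution"),
    ("Competitor A", "Category leader"),
    ("Competitor A", "Category leader"),
    ("Competitor A", "Leading enterprise platform"),
    ("Competitor B", "Cost-efficient"),
]


def dominant_descriptors(observations: list[tuple[str, str]]) -> dict[str, tuple[str, int]]:
    """Most frequent descriptor per brand, with its count (case-insensitive)."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for brand, descriptor in observations:
        counts[brand][descriptor.strip().lower()] += 1
    return {brand: tally.most_common(1)[0] for brand, tally in counts.items()}


if __name__ == "__main__":
    for brand, (descriptor, count) in dominant_descriptors(OBSERVATIONS).items():
        print(f"{brand}: {descriptor} ({count} mentions)")
```

Exact string matching is a deliberate simplification. In practice, near-synonyms such as "market leader" and "category leader" would probably need to be grouped before counting.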

4. Replacement & Displacement Risk

One of the most revealing AI metrics is replacement behavior.

If your brand disappears from a response, who fills the slot?

Displacement Risk Table

| Scenario | Strategic Implication |
|---|---|
| Same competitor replaces you | Authority imbalance |
| Rotational competitor pool | Fragmented category |
| No clear replacement | Opportunity |

Consistent displacement is not random. It signals structural authority asymmetry.

Tracking this requires structured Cross-LLM Competitive Analysis.
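The three scenarios in the Displacement Risk Table can be approximated with a small classifier. The Python sketch below rests on two simplifying assumptions: the "replacement" is taken to be the first-mentioned brand in any response where your brand is absent, and a single competitor counts as the consistent replacer once it fills at least 60% of those slots. Both the `concentration` threshold and the function name are hypothetical.

```python
from collections import Counter


def displacement_profile(
    responses: list[list[str]], brand: str, concentration: float = 0.6
) -> tuple[str, Counter]:
    """Classify who fills the slot when `brand` is missing from a response.

    Each response is the ordered list of brands mentioned in one AI answer.
    """
    absent = [mentions for mentions in responses if brand not in mentions]
    if not absent:
        return "No displacement observed", Counter()

    # Assumption: the first-mentioned brand is treated as the replacement.
    replacers = Counter(mentions[0] for mentions in absent if mentions)
    if not replacers:
        return "No clear replacement", replacers

    _, top_count = replacers.most_common(1)[0]
    if top_count / sum(replacers.values()) >= concentration:
        return "Same competitor replaces you", replacers
    return "Rotational competitor pool", replacers


if __name__ == "__main__":
    # Hypothetical runs of the same prompt across several sessions.
    runs = [
        ["Your Brand", "Competitor A"],
        ["Competitor A", "Competitor B"],
        ["Competitor A"],
        ["Competitor B", "Competitor A"],
    ]
    print(displacement_profile(runs, "Your Brand"))
```

In the sample runs, Your Brand is missing from three answers and Competitor A leads two of them, so the sketch reports the "Same competitor replaces you" scenario.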

5. Cross-Model Variance

Competitive dominance is rarely uniform.

| Model | Dominant Brand | Stability |
|---|---|---|
| ChatGPT | Competitor A | Stable |
| Gemini | Mixed | Volatile |
| Claude | Your Brand | Strong |
| Perplexity | Competitor B | Moderate |

If dominance varies heavily by model, that signals ecosystem-specific embedding differences.

A strong AI visibility strategy focuses on cross-model stability, not isolated wins.
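Cross-model stability can be expressed as a mean and a spread. The Python sketch below uses invented inclusion rates per model (the share of tracked prompts in which a brand appears) and reports the average together with the population standard deviation, where a lower spread suggests more uniform visibility. The data and function names are hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical inclusion rates per model: share of tracked prompts
# in which each brand was mentioned.
INCLUSION = {
    "ChatGPT": {"Your Brand": 0.4, "Competitor A": 0.8},
    "Gemini": {"Your Brand": 0.5, "Competitor A": 0.55},
    "Claude": {"Your Brand": 0.9, "Competitor A": 0.3},
    "Perplexity": {"Your Brand": 0.6, "Competitor A": 0.4},
}


def cross_model_profile(inclusion: dict[str, dict[str, float]], brand: str) -> tuple[float, float]:
    """Return (average inclusion, spread across models) for one brand."""
    rates = [per_model[brand] for per_model in inclusion.values()]
    return mean(rates), pstdev(rates)


def dominant_by_model(inclusion: dict[str, dict[str, float]]) -> dict[str, str]:
    """Brand with the highest inclusion rate in each model."""
    return {model: max(rates, key=rates.get) for model, rates in inclusion.items()}


if __name__ == "__main__":
    for brand in ("Your Brand", "Competitor A"):
        average, spread = cross_model_profile(INCLUSION, brand)
        print(f"{brand}: mean={average:.2f}, spread={spread:.2f}")
    print(dominant_by_model(INCLUSION))
```

A brand with a high mean but a large spread is winning in isolated models, which is the pattern the table above is meant to expose.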

6. Human Insight Layer

Here’s the human part most dashboards miss:

Competitive AI analysis isn’t just about numbers.

It’s about narrative momentum.

If competitors appear in:

- Industry reports

- Thought leadership pieces

- Conference recaps

- Comparison frameworks

then they are feeding the model continuously.

AI visibility is not manipulated - it is reinforced.

Strategic Conclusion

AI competitive analysis is about probability architecture.

You are not competing for rank.

You are competing for inclusion, authority framing, and stability across models.

Brands that build structured competitive intelligence loops will not react to AI shifts.

They will anticipate them.

Written by

Eyal Fadlon

Growth marketing specialist